Noise Turns Benchmarks Into Subfields: Why Robustness Is a Field Contract

Math Machine: Noise-Corridor Subfield Collapse Machine

License: CC BY 4.0

Source: https://arxiv.org/abs/2602.11348

Facts

The source, posted on February 11, 2026, introduces AgentNoiseBench, a framework that evaluates tool-using agents under noisy conditions by injecting controllable noise into existing agent benchmarks while keeping tasks solvable. It distinguishes two noise families: user-noise (ambiguity and variability in user interactions) and tool-noise (failures, inconsistent responses, partial results). It reports that model performance varies consistently across noise conditions, indicating sensitivity to realistic perturbations. The abstract does not state exact model counts or detailed quantitative outcomes.

What we add / What’s new

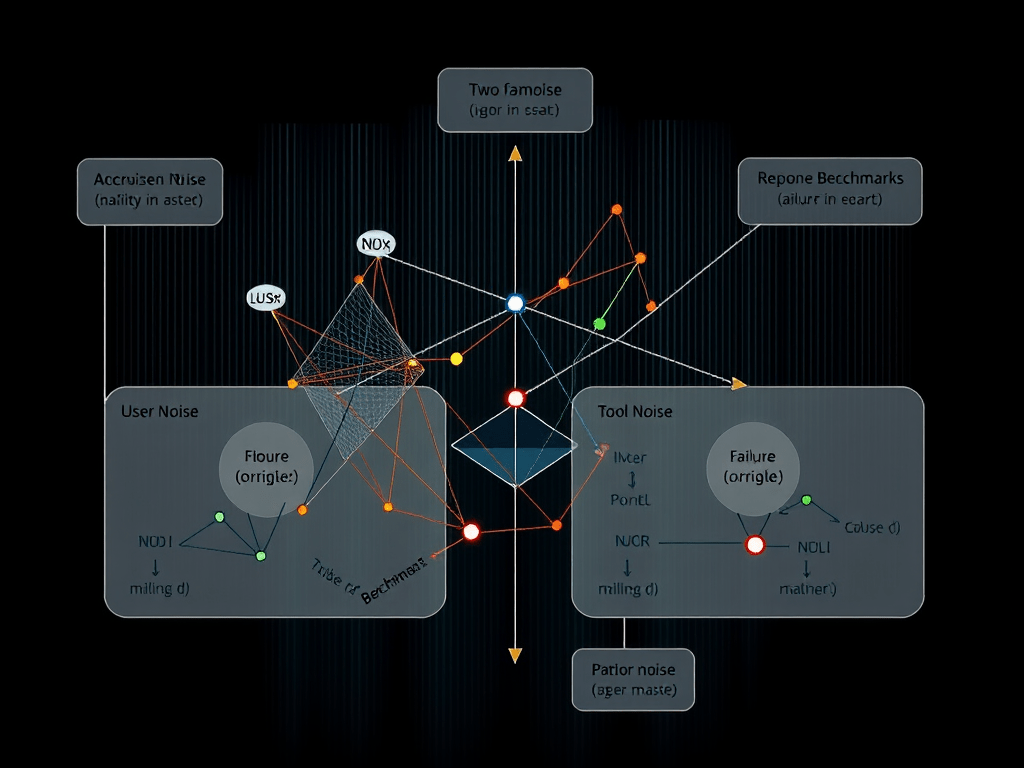

Noise is not “just realism.” It is a regime axis that induces evaluation subfields: the same task becomes a set of distinct measurable slices once the contract declares how ambiguity, tool failure, and partial observability are treated. Under the Large Language Fields (LLF) lens, this is the field contract becoming legible through instrumentation. [1]–[5]

This is also a collapse-style signal: pooled means can look stable while semantic integrity fails in the tails—the high-noise corridors where partial tool results and ambiguous user intent concentrate false closure. Robustness is not a single number; it is a map of where completion collapses first. [1], [3], [4], [8]–[10]

AgentNoiseBench is therefore best read as a “subfield factory”: it creates declared slices (noise corridors) that should be reported with worst-subfield performance, dispersion, and flip-points. Otherwise, the benchmark can still reward systems that look good on average while failing in the operational slices that dominate risk. [1], [2], [4], [6], [10]
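As a rough illustration of what such a report could look like, the sketch below computes a pooled mean, the worst corridor, and the dispersion for each model, and lists flip-points where a corridor reverses the pooled ordering of two models. The scores, model names, corridor names, and function names are all hypothetical, and none of them come from AgentNoiseBench. Using the population standard deviation as "dispersion" is likewise an assumption.

```python
from itertools import combinations
from statistics import mean, pstdev

# Hypothetical success rates per model and noise corridor (illustrative only).
SCORES = {
    "model_a": {"clean": 0.90, "user_noise": 0.85, "tool_noise": 0.40},
    "model_b": {"clean": 0.80, "user_noise": 0.70, "tool_noise": 0.60},
}


def corridor_report(scores):
    """Summarize each model by pooled mean, worst corridor, and dispersion."""
    report = {}
    for model, by_corridor in scores.items():
        values = list(by_corridor.values())
        worst = min(by_corridor, key=by_corridor.get)
        report[model] = {
            "pooled_mean": mean(values),
            "worst_corridor": worst,
            "worst_score": by_corridor[worst],
            "dispersion": pstdev(values),  # population std. dev. across corridors
        }
    return report


def flip_points(scores):
    """Return (model_x, model_y, corridor) triples where the corridor-level
    ordering of two models is the reverse of their pooled-mean ordering."""
    pooled = {m: mean(list(c.values())) for m, c in scores.items()}
    flips = []
    for x, y in combinations(sorted(scores), 2):
        pooled_gap = pooled[x] - pooled[y]
        for corridor in sorted(set(scores[x]) & set(scores[y])):
            gap = scores[x][corridor] - scores[y][corridor]
            if pooled_gap * gap < 0:  # opposite signs: the ordering inverts here
                flips.append((x, y, corridor))
    return flips


if __name__ == "__main__":
    for model, row in corridor_report(SCORES).items():
        print(model, row)
    print("flip points:", flip_points(SCORES))
```

With these made-up numbers, model_a leads on the pooled mean but has the lower worst-corridor score, and the tool-noise corridor is reported as a flip-point. This is the pattern a single pooled leaderboard would hide.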

Why it matters

In production, agents do not operate in idealized conversations or with perfectly reliable tools. Ambiguous requests, partial failures, inconsistent tool outputs, and interruptions are normal. If evaluation ignores these regimes, or compresses them into a single pooled score, teams can ship with false confidence, because the failure modes that cause incidents live exactly in the noisy slices.

Hypotheses

H1 — Noise corridors will act as contract-induced subfields where worst-subfield performance predicts deployment failures better than pooled means. [1] Falsifier: show pooled mean predicts operational failure rates as well as worst-subfield across declared noise regimes.

H2 — Model ordering will flip across user-noise vs tool-noise corridors, making “one leaderboard” non-portable across operational environments. [2] Falsifier: show stable model ranking across multiple noise corridors with low dispersion and no meaningful flips.

H3 — False closure under noise will be explained primarily by missing or weak receipts under budget, not by lack of model capability, because partial tool results can masquerade as completion. [3] Falsifier: show receipt completeness remains high under noise and does not explain false closure rates under audit sampling.

Where it flips (regimes)

Conclusions invert across: (1) user-noise vs tool-noise, (2) low-noise vs high-noise corridors, (3) deterministic tools vs stochastic/partial-failure tools, and (4) short single-step tasks vs multi-step workflows where partial updates can look like “done.”

Math behind it (without math)

A pooled mean is a compression: it throws away the geometry of failure. When failure concentrates in tails (high-noise corridors), averages become misleading—systems can “improve” while reliability collapses in the slices that matter operationally. Contract-explicit subfields preserve the structure: they reveal dispersion, worst slices, and regime flips, which are the signals authorization decisions actually need. [1], [2], [4], [10]
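A small worked example makes the compression concrete. The counts below are invented for illustration and assume that high-noise episodes are a minority of the evaluation set. Under that assumption, a second release raises the pooled success rate from 0.78 to 0.822 while its high-noise success rate falls from 0.60 to 0.30.

```python
# Hypothetical (successes, episodes) per corridor for two releases of one agent.
# The numbers are illustrative and are not taken from the source.
V1 = {"low_noise": (720, 900), "high_noise": (60, 100)}
V2 = {"low_noise": (792, 900), "high_noise": (30, 100)}


def pooled_rate(counts):
    """Success rate over all episodes, ignoring which corridor they came from."""
    successes = sum(s for s, _ in counts.values())
    episodes = sum(n for _, n in counts.values())
    return successes / episodes


def corridor_rates(counts):
    """Success rate reported separately for each corridor."""
    return {name: s / n for name, (s, n) in counts.items()}


if __name__ == "__main__":
    for label, counts in (("v1", V1), ("v2", V2)):
        print(label, "pooled:", pooled_rate(counts), "by corridor:", corridor_rates(counts))
```

The pooled number moves in the "right" direction only because the low-noise corridor holds nine times as many episodes. The per-corridor view shows the tail getting worse.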

Closure target

This case is “settled” when the benchmark can be expressed as a promotion-grade field card and reported accordingly, with: (a) explicit noise-regime declarations as first-class subfields, (b) worst-subfield and dispersion as primary outputs (not pooled means), (c) regime-flip maps that identify where ordering inverts, and (d) receipt completeness under audit sampling within a declared verification budget. The resulting report should show that these signals predict operational failures better than averages do. [1]–[4], [10]
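One possible way to make items (a) through (d) checkable is to hold them in a small record and flag whatever is missing. The sketch below is only that: the `FieldCard` class, its field names, and `missing_items` are hypothetical and are not defined by the source or by the cited LLF/FDL preprints. It checks presence only and does not test the claim that these signals predict operational failures better than averages.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class FieldCard:
    """Hypothetical report card; every field name here is an assumption."""
    noise_regimes: list = field(default_factory=list)    # (a) declared corridors
    worst_subfield: dict = field(default_factory=dict)   # (b) model -> worst score
    dispersion: dict = field(default_factory=dict)       # (b) model -> spread
    regime_flips: Optional[list] = None                  # (c) None means "not computed"
    receipt_completeness: Optional[float] = None         # (d) fraction in audit sample
    verification_budget: Optional[int] = None            # (d) audited episodes allowed


def missing_items(card):
    """Return the closure items (a-d) that the card does not yet report."""
    missing = []
    if not card.noise_regimes:
        missing.append("a")
    if not card.worst_subfield or not card.dispersion:
        missing.append("b")
    if card.regime_flips is None:  # an empty list is a valid "no flips" result
        missing.append("c")
    if card.receipt_completeness is None or card.verification_budget is None:
        missing.append("d")
    return missing


if __name__ == "__main__":
    draft = FieldCard(noise_regimes=["user_noise", "tool_noise"])
    print("still missing:", missing_items(draft))
```

An empty flip list is treated as a reported result, while `None` means the map was never computed. That distinction keeps "no flips found" from being confused with "flips not checked".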

References

[1] R. Figurelli, “Benchmark Convergence As Operational Confirmation Of Large Language Fields (LLFs),” preprint, 2026.

[2] R. Figurelli, “Large Language Fields (LLFs): The Invisible Layer Above LLMs,” preprint, 2025.

[3] R. Figurelli, “Field Definition Language (FDL): A Proposal to Evolve APIs into Governed Fields,” preprint, 2025.

[4] R. Wang et al., “AgentNoiseBench: Benchmarking Robustness of Tool-Using LLM Agents Under Noisy Condition,” preprint, 2026.

[5] H. M. Pysklo, A. Zhuravel, and P. D. Watson, “Agent-Diff: Benchmarking LLM Agents on Enterprise API Tasks via Code Execution with State-Diff-Based Evaluation,” preprint, 2026.

[6] C. E. Jimenez et al., “SWE-bench: Can Language Models Resolve Real-World GitHub Issues?,” preprint, 2023.

[7] F. F. Xu et al., “TheAgentCompany: Benchmarking LLM Agents on Consequential Real World Tasks,” preprint, 2024.

[8] N. F. Liu et al., “Lost in the Middle: How Language Models Use Long Contexts,” preprint, 2023.

[9] C.-P. Hsieh et al., “RULER: What’s the Real Context Size of Your Long-Context Language Models?,” preprint, 2024.

[10] S. Yao et al., “ReAct: Synergizing Reasoning and Acting in Language Models,” preprint, 2022.